Everyone’s talking about AI in research & ReOps workflows

Whether you spend five minutes on LinkedIn or Reddit, read our recent exploration of UXR and ReOps in an era of AI and automation, or attended our Use of AI Tools in Daily Research & ReOps Work webinar, one thing is clear: the conversation around AI has moved well beyond early skepticism.

As Peter Merholz, whose work bridges technology and humanism, pointed out during the webinar, the question is no longer whether AI belongs in research, but where it helps and where it risks undermining the work:

It’s ironic that AI might help us scale empathy in research, but we still haven’t figured out how to scale consent.

That shift reframes our approach toward more nuance, accountability, and systems thinking, asking:

How is AI changing the way research gets done?

Which use cases make sense?

Where do we need guardrails?

Where is human judgment still non-negotiable?

Webinar recap

Ethnio designed The Use of AI Tools in Daily Research & ReOps Work session to move this conversation out of the digital ether and into daily practice.

The session was hosted by our CEO, Nate Bolt, a pioneer in research operations and creator of Ethnio, the first user research CRM. Before founding Ethnio, Nate led the UXR agency Bolt | Peters, which was acqui-hired by Meta, where he later served as a Research Manager and briefly worked alongside our guest speaker:

Pete Fleming, Head of UX Research @ YouTube

Pete leads UXR for YouTube Ads at Google and formerly headed research at Gemini. With prior experience at Instagram and Facebook, as well as in civic service, he brings a multi-dimensional perspective on AI’s role in UXR.

We want AI to help us do the parts of our job we don’t like, but more importantly, it should help us do more of the good parts.

This recap distills key insights and presents a practical roadmap for integrating AI thoughtfully across the research lifecycle.

Key takeaways from the webinar

Pete shared how AI is reshaping workflows, emphasizing:

AI works best when it helps humans do more of what matters.

Your research lifecycle is your AI roadmap.

Synthetic participants ≠ real insight (yet).

Don’t fear automation—use it to scale human connection.

Stay close to the raw data or risk losing the nuance.

Why we need a study lifecycle lens

Research studies begin with objectives, stakeholder intake, and planning, and quickly expand to include product, design, engineering, and leadership. Across these roles, everyone is asking the same question as Human-Centered Design Researcher Abbey Ripstra:

I’m curious about where AI works, and doesn’t work, in research. Are there phases where AI is especially effective or ineffective?

Pete Fleming’s advice?

Stop debating AI in the abstract. Instead, evaluate AI through the lens of the research lifecycle.

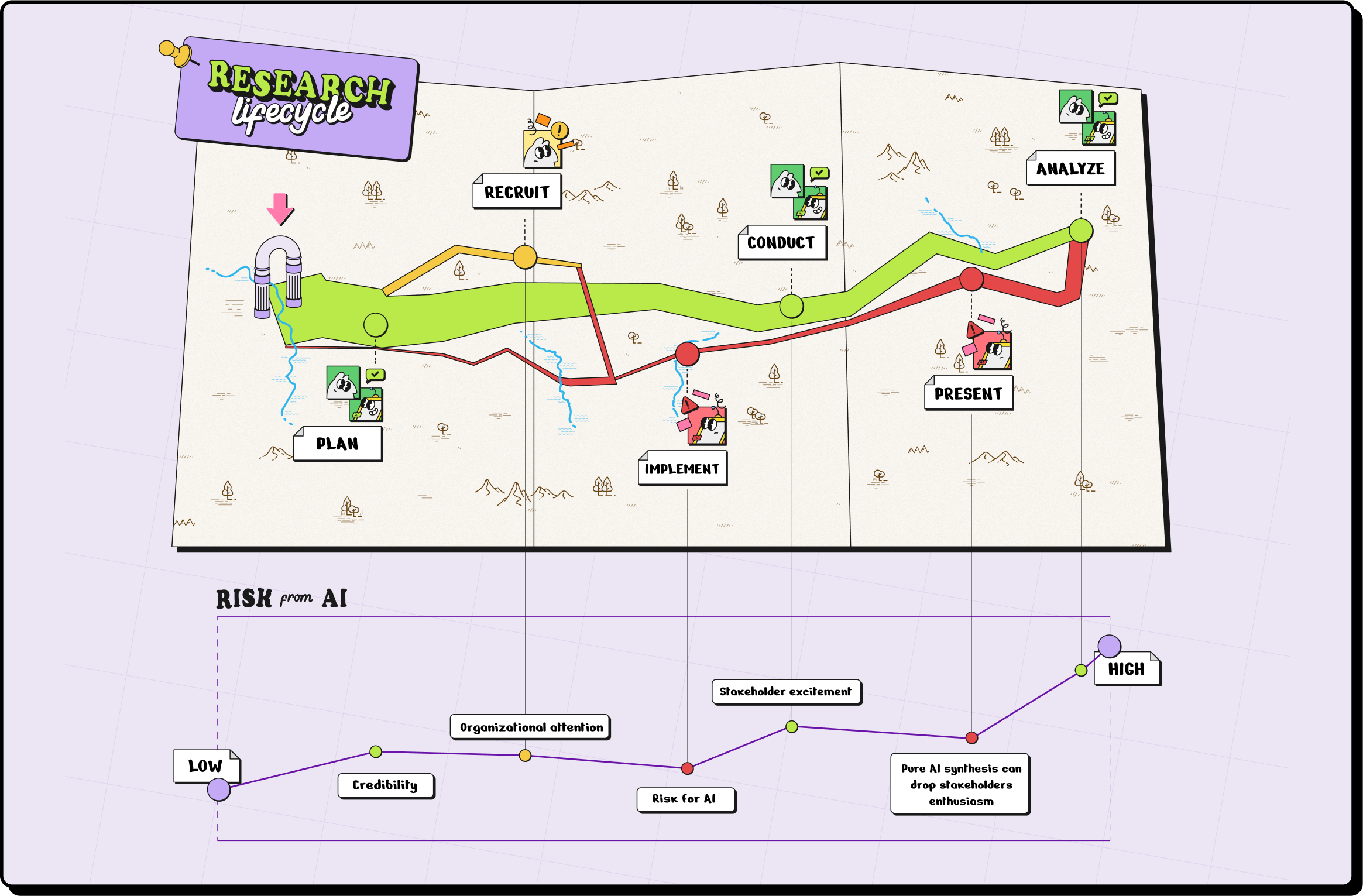

Most studies follow a similar arc. While research practices vary by org, the core lifecycle typically includes five phases:

Planning

Intake & Recruitment

Execution (Data Collection)

Analysis & Synthesis

Presentation & Impact

Mapping AI to each stage, from planning through presentation, makes the opportunities and trade-offs clear and actionable.

Stage-by-stage AI adoption across the research lifecycle

Not all phases carry equal risk or opportunity. Some stages are ideal for AI augmentation. Others require strict governance and human judgment to maintain research quality and ethics.

This stage-based framework helps teams identify:

Where AI adds value

Where human oversight is essential

How to adopt AI iteratively

Together, these answers tell the story of how AI can empower, or derail, your research practice. Let’s walk through each of the five stages in more detail:

Stage 1: Study Planning (Low Risk, High Reward)

Pete emphasized that early-stage research planning is a low-risk, high-adoption area for AI. Planning is where many researchers first feel comfortable experimenting with AI tools like Gemini or ChatGPT to save time on:

Drafting study plans

Brainstorming research questions

Polishing research goals

While the measurable impact might be modest, the main benefit is accelerating work you’d otherwise put off. AI acts as a helpful assistant, not a replacement, during planning.

Stage 2: Study Intake & Participant Recruitment (Medium Risk, High Reward)

When moving to study intake and recruitment, AI can reduce manual load by automating tasks like:

Summarizing stakeholder intake

Prioritizing study requests

Sorting or tagging participant lists

But Pete emphasized that this phase also introduces heightened risk due to the handling of sensitive participant data. Kim Maes, Operations & People Leader at Meta, echoed the concern:

Talking about risk: user data is often a sensitive topic. How are you making sure AI models handle user data ethically and securely?

Data privacy is critical. Integrating AI at this point requires privacy-first tools and enterprise-grade security protocols. Pete shared how early automation, like Ethnio pop-ups at Facebook, scaled participant outreach without compromising human oversight and connection.

Pro tip:

If you’re integrating AI into intake or recruitment workflows, make sure your tools meet enterprise security standards. Choose privacy-first platforms, like Ethnio, which prioritize compliance and consent, offering SOC 2 certification, GDPR/CCPA controls, and HIPAA readiness.

Stage 3: Study Execution (Medium to High Risk)

During execution, automation and AI can help:

Coordinate logistics

Schedule sessions

Run tech checks

Free researchers to focus on human-to-human interactions

Pete cautioned against overreliance on AI moderators or simulated participants. While these tools can assist in scaling studies or serve as training aids for new moderators, they cannot replace the empathy and nuance of real participant interactions.

Human connection remains central to UXR’s value.

We want to scale qualitative research, but not at the cost of empathy.

Stage 4: Synthesis & Analysis (High Risk, High Controversy)

AI is already transforming how researchers analyze qualitative data, for example by auto-coding transcripts or summarizing findings.

I’ve started training junior researchers using synthetic data sets. It’s less risky and a great way to practice analysis before the real thing.

Pete encourages using AI to assist with synthesis while maintaining human oversight. The future may include databases where transcripts, videos, and tags are searchable, with AI surfacing themes without replacing critical human judgment.

But Pete cautions researchers to stay close to the raw data to catch errors and understand context. Overreliance on AI summaries can reinforce bias or lose nuance.

Synthetic participants are a cool idea in theory, but the risk is we start testing our ideas on ourselves, because the models just reflect back our own assumptions.

Stage 5: Presentation & Impact (Medium Risk, Underrated Opportunity)

This stage is an underrated opportunity for AI to add value, not by replacing researchers, but by amplifying their influence and impact.

I’d love to see LLMs used to track whether research insights are picked up later in strategy documents. That’s how you show real impact.

AI can assist by helping researchers practice presentations (e.g., through simulated audiences) and by tracking how insights propagate into strategy documents. AI tools can support not only external storytelling but also internal communication. As Krista Hilton notes:

Sometimes I use LLMs to rephrase stakeholder feedback before sharing it with the team, making it easier to absorb and act on without diluting the core message.

This reinforces the webinar’s central theme: AI should amplify, not diminish, the human side of research, especially when influencing decisions, persuading stakeholders, and driving change.

How to apply this framework

Pete closed with a clear four-step approach:

1. Map your research lifecycle.

2. For each stage, ask:

What’s the risk level of using AI?

What’s the value add?

What safeguards (PII/IP protection) are needed?

3. Don’t generalize: apply nuance to each task.

4. Keep humans close to the robots.

Pro tip:

Early stages = best place for efficiency gains.

Later stages = higher risk, higher need for human judgment.

What not to do with AI

❌ Don’t plug in participant data without knowing whether it’s being used to train a model.

❌ Don’t assume “more AI” means “more insight.”

❌ Don’t let efficiency overshadow empathy.

Underrated webinar insights worth sharing

Human connection is ResearchOps’ superpower: Use AI to create more, not less.

Synthetic data may expose demographic over-reliance: Gemini’s “digital twins” show that behavior often trumps identity.

Presentation is the midpoint, not the end: What happens after is what drives real impact.

AI helps junior researchers punch above their weight: Equal access = creative leverage.

TL;DR

✅ Planning is the easiest entry point—high adoption, low risk.

⚠️ Panel management and synthetic participants are where ethical risks spike, requiring governance and clear opt-out policies.

As Joanna V, Lead UX Researcher at Square, reminds us:

Real people are messy and inconsistent and amazing. I want AI to help me reach more of them, not replace them.

🚧 Analysis offers huge time savings, but don’t lose touch with raw data.

💡 Use the presentation phase to amplify insights, not replace influence.

Want to dive deeper into the lifecycle framework?

Think Charles Minard’s famous map of Napoléon’s march, but for user research. 👀

Stay tuned for our upcoming piece, which compares the research lifecycle as an AI roadmap to Minard’s iconic map, a compelling metaphor for tracking loss, progress, and strategy in complex systems. It will visualize workflows and tools to help teams design strategic, iterative AI adoption.

This framework will help teams better understand how AI fits their unique context, avoid blind spots, and continuously optimize for impact.

Don’t miss future events!

Feeling FOMO after reading about our latest webinar? Stay up to date via LinkedIn or our Events page.

👋 What's Ethnio?

Ethnio is a Research Operations Platform that removes the pain of recruitment and study management, so teams can scale research programs confidently without compromising on quality or operational control.

As AI reshapes workflows, Ethnio empowers you to integrate automation strategically, freeing your team to focus on insights that accelerate smarter product decisions.